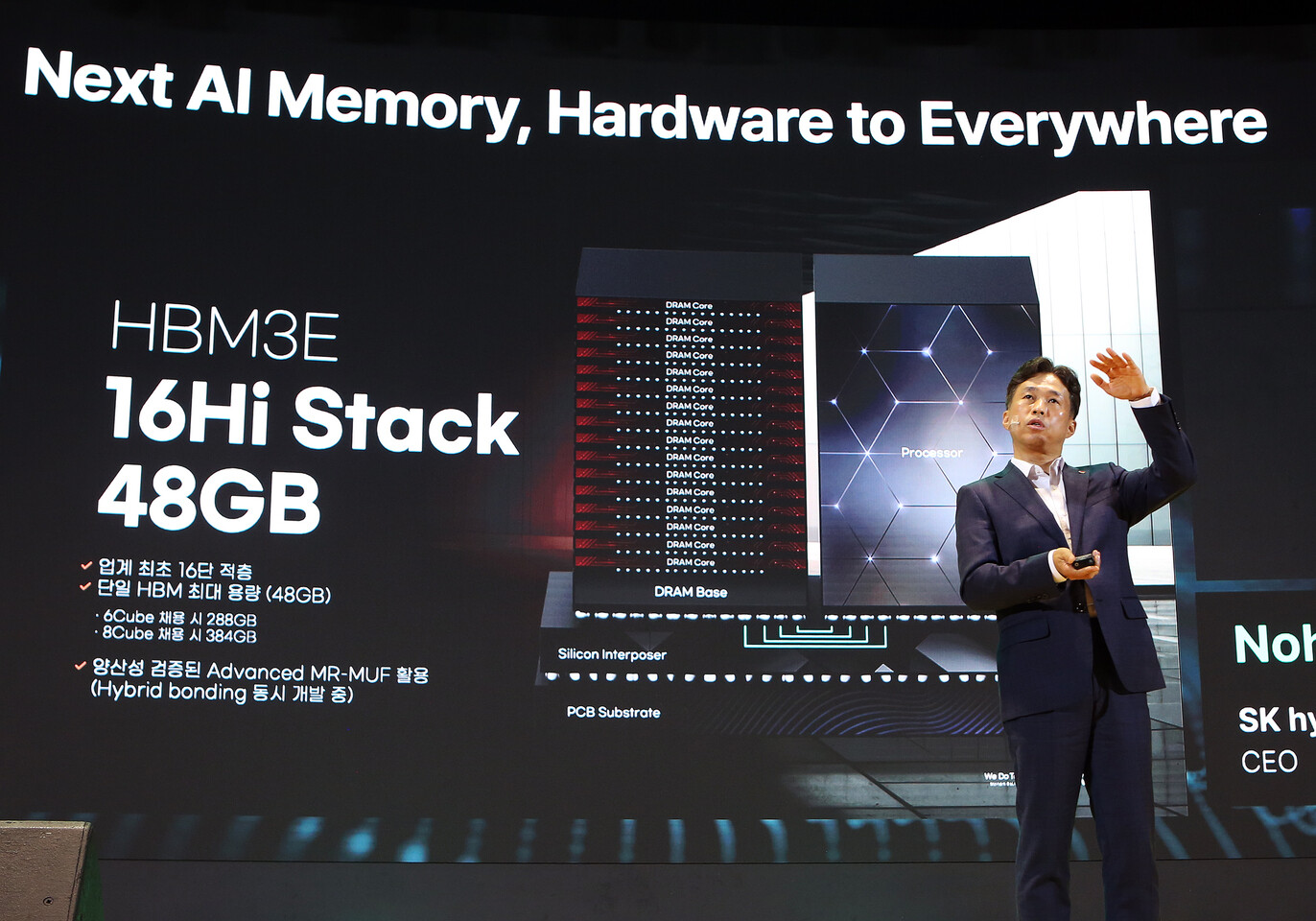

At the SK AI Summit 2024, SK hynix CEO took the stage and revealed the industry’s first 16-Hi HBM3E memory - beating both Samsung and Micron to the punch. With the development of HBM4 going strong, SK hynix prepared a 16-layer version of its HBM3E offerings to ensure “technological stability” and aims to offer samples as early as next year.

A few weeks ago, SK hynix unveiled a 12-Hi variant of its HBM3E memory - securing contracts from AMD (MI325X) and Nvidia (Blackwell Ultra). Having raked in record profits last quarter, SK hynix is at full steam once again: the giant has just announced a 16-layer upgrade to its HBM3E lineup, boasting capacities of 48GB per stack (3GB per individual die). This increase in density allows AI accelerators to feature up to 384GB of HBM3E memory in an 8-stack configuration.
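The capacity figures above follow from simple multiplication. A minimal sketch of the arithmetic, using only the die count, die density, and stack count quoted in the article (the function names are illustrative, not part of any SK hynix tooling):

```python
def stack_capacity_gb(dies_per_stack: int, gb_per_die: int) -> int:
    """Capacity of one HBM stack in GB."""
    return dies_per_stack * gb_per_die


def accelerator_capacity_gb(stacks: int, dies_per_stack: int, gb_per_die: int) -> int:
    """Total HBM capacity across all stacks on one accelerator, in GB."""
    return stacks * stack_capacity_gb(dies_per_stack, gb_per_die)


GB_PER_DIE = 3  # per-die density quoted in the article

per_stack_16hi = stack_capacity_gb(16, GB_PER_DIE)
total_8_stacks = accelerator_capacity_gb(8, 16, GB_PER_DIE)

print(per_stack_16hi, total_8_stacks)  # prints: 48 384
```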

SK hynix claims an 18% improvement in training performance alongside a 32% boost in inference performance. Like its 12-Hi counterpart, the new 16-Hi HBM3E memory incorporates packaging technologies like MR-MUF (Mass Reflow Molded Underfill), which connects chips by melting the solder between them. SK hynix expects 16-Hi HBM3E samples to be ready by early 2025. However, this memory could be short-lived, as Nvidia’s next-gen Rubin chips are slated for mass production later next year and will be based on HBM4.

That’s not all, as the company is actively working on PCIe 6.0 SSDs, high-capacity QLC (Quad Level Cell) eSSDs aimed at AI servers, and UFS 5.0 for mobile devices. In addition, to power future laptops and even handhelds, SK hynix is developing an LPCAMM2 module and soldered LPDDR5/6 memory using its 1c nm node. There isn’t any mention of CAMM2 modules for desktops, so PC folk will need to wait - at least until CAMM2 adoption matures.

To overcome what SK hynix calls a “memory wall”, the memory maker is developing solutions such as Processing Near Memory (PNM), Processing in Memory (PIM), and Computational Storage. Samsung has already demoed its version of PIM - wherein data is processed within the memory so that data doesn’t have to move to an external processor.

HBM4 will double the interface width from 1024 bits to 2048 bits while supporting up to 16 vertically stacked DRAM dies (16-Hi) - each packing up to 4GB of memory. Those are some monumental upgrades, generation on generation, and should be ample to fulfill the high memory demands of upcoming AI GPUs.
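To put those HBM4 numbers in perspective, here is a rough sketch of what they imply. Peak bandwidth scales as interface width times per-pin data rate, so at an equal pin rate a 2048-bit interface carries twice the data of a 1024-bit one. The pin rate below is a hypothetical placeholder chosen only to show the ratio; the article does not quote one, and the function name is illustrative.

```python
def peak_bandwidth_gb_per_s(interface_bits: int, pin_rate_gbit_per_s: float) -> float:
    """Peak stack bandwidth in GB/s: width (bits) x per-pin rate (Gbit/s) / 8 bits per byte."""
    return interface_bits * pin_rate_gbit_per_s / 8


# ASSUMPTION: placeholder pin rate, identical for both generations.
# It is not a figure from the article; only the resulting ratio matters here.
HYPOTHETICAL_PIN_RATE = 8.0

hbm3e_width_bw = peak_bandwidth_gb_per_s(1024, HYPOTHETICAL_PIN_RATE)
hbm4_width_bw = peak_bandwidth_gb_per_s(2048, HYPOTHETICAL_PIN_RATE)
bandwidth_ratio = hbm4_width_bw / hbm3e_width_bw

# Maximum per-stack capacity from the article's figures: 16 dies x 4GB each.
hbm4_stack_gb = 16 * 4

print(bandwidth_ratio, hbm4_stack_gb)  # prints: 2.0 64
```

Actual HBM4 bandwidth will depend on the per-pin speeds vendors ship, so the doubling shown here is the contribution of the wider interface alone.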

Samsung’s HBM4 tape-out is set to advance later this year. On the flip side, reports suggest that SK hynix already achieved its tape-out phase back in October. Following a traditional silicon development lifecycle, expect Nvidia and AMD to receive qualification samples by Q1/Q2 next year.


The SK AI Summit 2024 is being held at COEX Convention Center in Seoul November 4-5. The event is the largest AI symposium in Korea, the company claimed.

Hassam Nasir is a die-hard hardware enthusiast with years of experience as a tech editor and writer, focusing on detailed CPU comparisons and general hardware news. When he’s not working, you’ll find him bending tubes for his ever-evolving custom water-loop gaming rig or benchmarking the latest CPUs and GPUs just for fun.